LlamaIndex 2026: Build Your Private AI Knowledge Base (RAG)

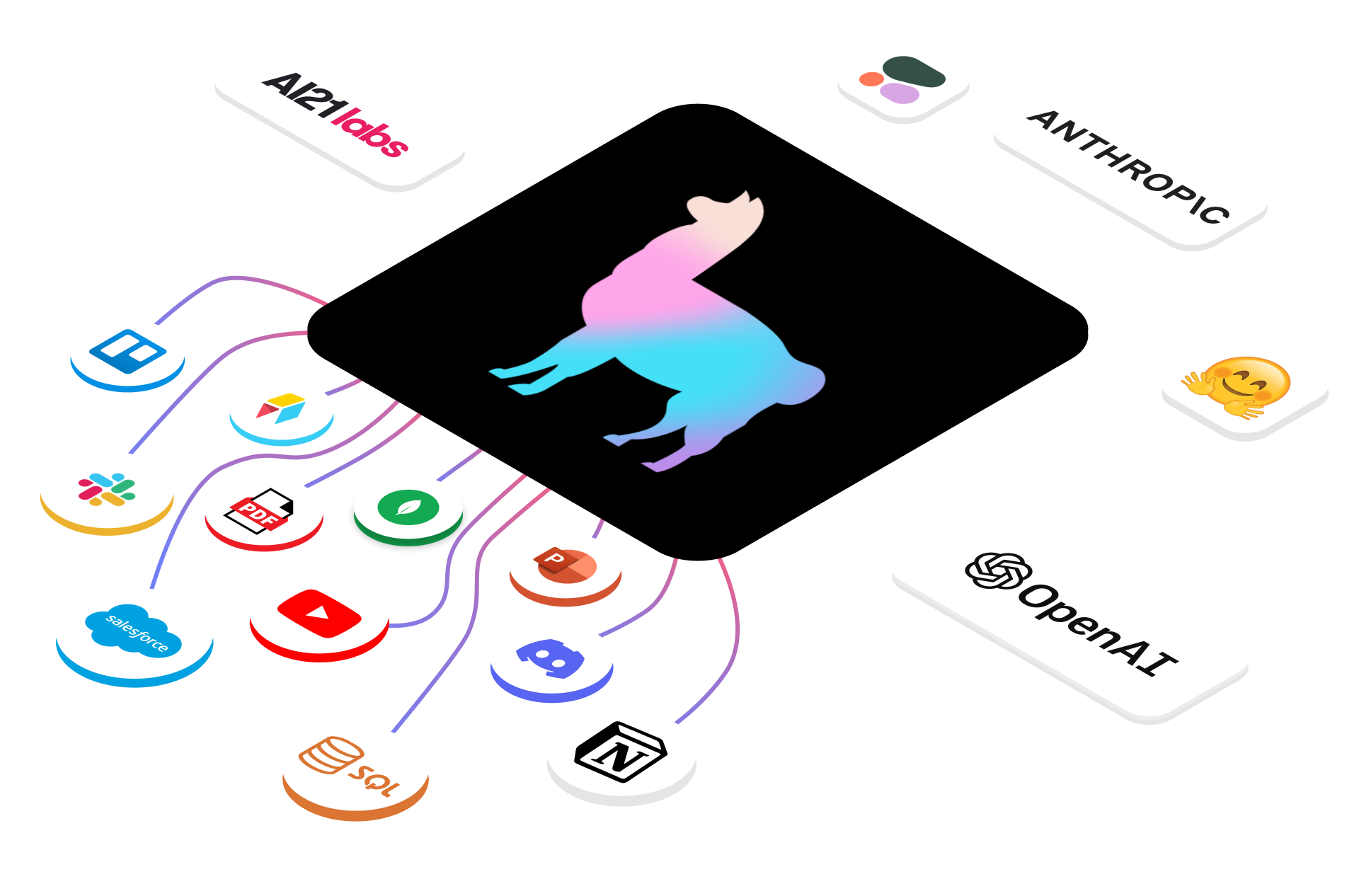

More than just connecting data: LlamaIndex is a framework built specifically for RAG applications. From PDFs to SQL database queries, it helps AI truly understand your data.

If LangChain is a Swiss Army knife, then LlamaIndex is a precision surgical instrument—especially for operating on data.

When building RAG (Retrieval-Augmented Generation) applications, processing, indexing, and retrieving data efficiently is usually the biggest pain point. LlamaIndex (formerly GPT Index) focuses on exactly this, letting LLMs read your private data (PDFs, Notion, SQL, APIs) as easily as reading from memory.

What is LlamaIndex?

LlamaIndex is a data framework for connecting custom data sources to large language models.

It solves three core problems:

- Ingestion: How do you ingest diverse data (Lark docs, Slack records, PDFs)?

- Indexing: How do you split, vectorize, and store that data?

- Querying: How do you find exactly the segment the AI needs within a large volume of data?
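Of the three, indexing is the easiest to picture in code: documents are cut into overlapping chunks before each chunk is embedded. The plain-Python sketch below shows only the splitting step, under simplifying assumptions: it counts whitespace-separated words, whereas LlamaIndex's own splitters (such as `SentenceSplitter`) count tokens and try to keep sentences intact. `chunk_words` and its defaults are hypothetical names chosen for illustration.

```python
def chunk_words(text: str, chunk_size: int = 8, overlap: int = 2) -> list[str]:
    """Split text into chunks of `chunk_size` words; consecutive chunks share `overlap` words."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in [0, chunk_size)")
    words = text.split()
    step = chunk_size - overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this window already reached the end of the text
    return chunks


text = (
    "The warranty period is two years from the date of purchase. "
    "Batteries are covered for six months only."
)
for i, chunk in enumerate(chunk_words(text, chunk_size=8, overlap=2)):
    print(i, chunk)
```

Consecutive chunks share `overlap` words, so text near a chunk boundary shows up in two chunks instead of being cut off in both.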

Environment Setup

1. Installation

```bash
pip install llama-index
```

2. Configure OpenAI Key

LlamaIndex uses OpenAI’s embedding model and LLM by default.

```bash
export OPENAI_API_KEY="sk-proj-xxxxxxxx"
```

Hands-On: “Chat with PDF” in 5 Lines of Code

Suppose you have a `sample.pdf` (such as a product manual) and want AI to answer questions based on it.

- Create a folder called `data` and put `sample.pdf` inside.
- Write a Python script:

```python
from llama_index.core import VectorStoreIndex, SimpleDirectoryReader

# 1. Load data
documents = SimpleDirectoryReader("data").load_data()

# 2. Build index (auto-split, embed, store in memory)
index = VectorStoreIndex.from_documents(documents)

# 3. Create query engine
query_engine = index.as_query_engine()

# 4. Ask a question
response = query_engine.query("What's the warranty period mentioned in this manual?")
print(response)
```

What happened?

- `SimpleDirectoryReader` automatically parsed the PDF text.
- `VectorStoreIndex` split the text into chunks and called the OpenAI Embedding API to convert each chunk into a vector.
- `query` first searches the vector store for the chunks most similar to the question, then sends those chunks plus the question to the LLM (an OpenAI chat model by default), which generates the answer.
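The query step can be sketched in plain Python as retrieve-then-prompt: score every chunk against the question, keep the top k, and pack them into one prompt. This is a simplified illustration, not LlamaIndex's code: a toy word-count `embed` stands in for the OpenAI Embedding API, the sketch stops before the actual LLM call, and `embed`, `retrieve`, `build_prompt`, and the prompt wording are all hypothetical.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': lowercase word counts (stand-in for a real embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(count * b[word] for word, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, chunks: list[str], top_k: int = 2) -> list[str]:
    """Return the top_k chunks most similar to the question."""
    q = embed(question)
    ranked = sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)
    return ranked[:top_k]


def build_prompt(question: str, context: list[str]) -> str:
    """Pack the retrieved chunks and the question into a single prompt for the LLM."""
    context_block = "\n".join(f"- {c}" for c in context)
    return (
        "Answer the question using only the context below.\n"
        f"Context:\n{context_block}\n"
        f"Question: {question}\nAnswer:"
    )


chunks = [
    "The warranty period is two years from the date of purchase.",
    "To reset, hold down power for ten seconds.",
    "Batteries are covered by a separate six-month warranty.",
]
question = "What is the warranty period?"
print(build_prompt(question, retrieve(question, chunks, top_k=2)))
```

In LlamaIndex the number of retrieved chunks is controlled by `similarity_top_k`, which you can pass to `index.as_query_engine(...)`.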

Advanced: Agentic RAG

In 2026, RAG is no longer just a simple “retrieve-and-answer” step: it increasingly means agents that reason over multiple steps and call tools. LlamaIndex provides Agent interfaces for building them.

Imagine you need AI to analyze a complex financial report. Regular RAG might give wrong answers because chunking fragments the document, for example separating a table from the text that explains it. LlamaIndex’s ReAct Agent can instead:

- First search for “Q4 2025 revenue.”

- Discover the data is in a table, then call the “Pandas Query Engine” tool.

- Calculate growth rate.

- Synthesize conclusions.
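The loop behind these steps (think, call a tool, read the result, repeat) can be sketched without an LLM. In this illustration a hard-coded `scripted_policy` plays the role of the model's reasoning, the two tools are stubs, and the revenue figures are made-up placeholders; none of these names come from LlamaIndex, and the sketch shows only the control flow of a ReAct-style agent, not how `ReActAgent` is implemented.

```python
from typing import Callable


# Hypothetical stub tools; the revenue figures are made-up placeholders.
def search_revenue(quarter: str) -> str:
    table = {"Q4 2024": "100.0", "Q4 2025": "120.0"}
    return table.get(quarter, "not found")


def growth_rate(values: str) -> str:
    """Takes 'old,new' and returns the growth as a percentage string."""
    old, new = (float(v) for v in values.split(","))
    return f"{(new - old) / old:.1%}"


TOOLS: dict[str, Callable[[str], str]] = {
    "search_revenue": search_revenue,
    "growth_rate": growth_rate,
}


def scripted_policy(question: str, history: list[tuple[str, str, str]]) -> tuple[str, str]:
    """Stand-in for the LLM: picks (action, action_input) from the steps so far.

    A real model would also read `question`; this script ignores it.
    """
    if len(history) == 0:
        return "search_revenue", "Q4 2025"
    if len(history) == 1:
        return "search_revenue", "Q4 2024"
    if len(history) == 2:
        new, old = history[0][2], history[1][2]
        return "growth_rate", f"{old},{new}"
    return "finish", f"Q4 2025 revenue grew {history[2][2]} year over year."


def react_loop(question: str, max_steps: int = 5) -> str:
    history: list[tuple[str, str, str]] = []  # (action, input, observation)
    for _ in range(max_steps):
        action, action_input = scripted_policy(question, history)  # Thought -> Action
        if action == "finish":
            return action_input  # Final answer
        observation = TOOLS[action](action_input)  # Act, then Observe
        history.append((action, action_input, observation))
    return "Stopped: step limit reached."


print(react_loop("How much did revenue grow in Q4 2025?"))
```

In a real agent, the LLM produces each thought and action from the question, the tool descriptions, and the observations so far; `max_steps` guards against a model that never decides to finish.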

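```python
from llama_index.core.agent import ReActAgent
from llama_index.core.tools import FunctionTool


# Define a calculation tool (a toy example; the rates are made up)
def time_travel_tax_calculator(year: int, income: float) -> float:
    """Calculate future taxes: 50% of income after 2030, 20% otherwise."""
    if year > 2030:
        return income * 0.5
    return income * 0.2


tool = FunctionTool.from_defaults(fn=time_travel_tax_calculator)

# Create Agent. Append more tools (e.g. query-engine tools over your documents) to this list.
# from_tools()/chat() is the earlier agent API; newer llama-index releases that moved to
# workflow-based agents may not provide it, so check your installed version.
agent = ReActAgent.from_tools([tool], verbose=True)

response = agent.chat("If I earn 1 million in 2032, how much tax do I pay?")
print(response)
```

Why Choose LlamaIndex Over LangChain?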

They’re not mutually exclusive; they’re often used together. But if your application is data-heavy (e.g., processing millions of documents or complex knowledge-graph retrieval), LlamaIndex’s built-in advanced retrieval strategies (like Hierarchy Index and Sentence Window Retrieval) often retrieve more precisely than LangChain’s defaults.
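Sentence Window Retrieval matches the query against individual sentences for precision, then passes the LLM each matched sentence together with its neighbors for context. The plain-Python sketch below shows that idea with a toy word-overlap score in place of embeddings; `split_sentences`, `overlap_score`, `sentence_window_retrieve`, and `window` are illustrative names. In LlamaIndex the same pattern is usually built from `SentenceWindowNodeParser` and `MetadataReplacementPostProcessor`, which this sketch does not use.

```python
import re


def split_sentences(text: str) -> list[str]:
    """Naive splitter: break after '.', '!' or '?' followed by whitespace."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]


def _words(text: str) -> set[str]:
    """Lowercase alphanumeric words in the text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def overlap_score(query: str, sentence: str) -> int:
    """Toy relevance score: number of distinct words shared with the query."""
    return len(_words(query) & _words(sentence))


def sentence_window_retrieve(query: str, text: str, window: int = 1) -> str:
    """Match the single best sentence, then return it with `window` neighbors on each side."""
    sentences = split_sentences(text)
    if not sentences:
        return ""
    # 1. Retrieve at sentence granularity (precise matching).
    best = max(range(len(sentences)), key=lambda i: overlap_score(query, sentences[i]))
    # 2. Expand to the surrounding window (context for the LLM).
    start = max(0, best - window)
    end = min(len(sentences), best + window + 1)
    return " ".join(sentences[start:end])


doc = (
    "Revenue figures are listed in Table 3. Q4 revenue rose sharply. "
    "The increase came from the new subscription tier. Headcount stayed flat."
)
print(sentence_window_retrieve("Why did Q4 revenue rise?", doc, window=1))
```

Here the best-matching sentence only says that revenue rose; the window adds the neighboring sentence that explains why, which is the context a single small chunk would lose.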

Summary

- LangChain: Excels at workflow orchestration, highly versatile.

- LlamaIndex: Excels at data RAG, high retrieval precision.

Recommendation: Use LlamaIndex for data indexing and retrieval, use LangChain (or LlamaIndex native Agents) for higher-level interaction logic.

In an era when data is an asset, LlamaIndex is the shovel that helps you mine that gold.